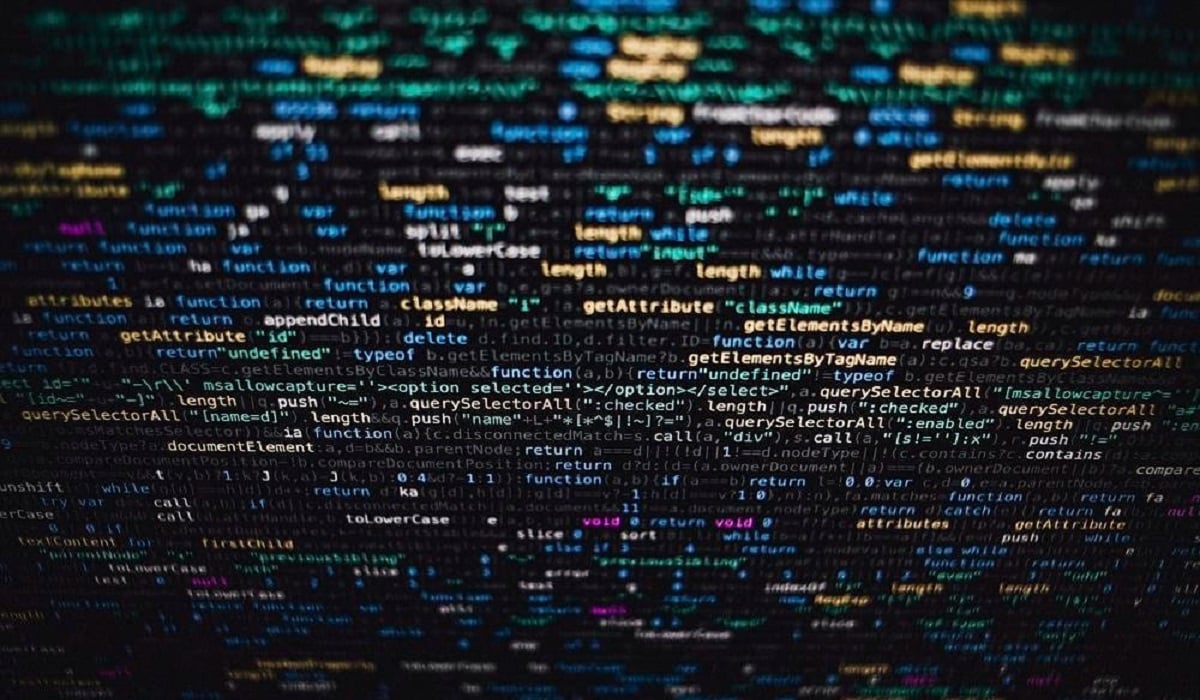

Before launching your scraping project, it helps to understand how a scraper works. In this article, we'll walk through it step by step!

Step 1: Sending the HTTP request

During web scraping, the scraper usually starts by sending an HTTP request (most often a GET request) to the URL of each page you want to scrape.

To make the server think it is a "normal" browser, the scraper can include common HTTP headers, for example a User-Agent that mimics Chrome or Firefox, or cookies.

👉 Basically, the scraper "pretends to be" a browser so that it doesn't get blocked by the server!
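
As a rough illustration of this first step, the sketch below prepares a GET request with browser-like headers using only Python's standard library. The URL, the header values, and the function names `build_request` and `fetch_html` are assumptions made for the example, not something prescribed by a particular tool.

```python
import urllib.request

# Hypothetical values chosen for illustration only.
URL = "https://example.com/products"
BROWSER_HEADERS = {
    # A User-Agent string resembling a desktop Chrome browser.
    "User-Agent": (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
        "AppleWebKit/537.36 (KHTML, like Gecko) "
        "Chrome/120.0.0.0 Safari/537.36"
    ),
    "Accept": "text/html,application/xhtml+xml",
    "Accept-Language": "en-US,en;q=0.9",
}


def build_request(url):
    """Prepare a GET request that carries browser-like headers."""
    return urllib.request.Request(url, headers=BROWSER_HEADERS, method="GET")


def fetch_html(url, timeout=10.0):
    """Send the request and return the response body decoded as text."""
    with urllib.request.urlopen(build_request(url), timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Calling `fetch_html(URL)` would then return the HTML of the page as a string, ready for the next step.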

Step 2: Receiving and analyzing HTML content

In response to the request, the site returns the HTML code of the page you are interested in. This code contains all the content visible on the web page (titles, text, images, links, prices, reviews, etc.).

It is important to note that the scraper does not "see" the page as a human does.

👉 Instead, it parses (reads) the HTML structure to identify the elements it is interested in.
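
To make "reading the structure" more concrete, here is a minimal sketch that walks through a small HTML document with Python's built-in `html.parser` and records each tag along with its nesting depth. The sample markup and the `OutlineParser` class are invented for the example, and the depth counter assumes every tag is explicitly closed.

```python
from html.parser import HTMLParser

# Sample markup invented for illustration.
SAMPLE_HTML = """
<html>
  <body>
    <h1 class="product-title">Sports shoes</h1>
    <span class="price">79,99 €</span>
  </body>
</html>
"""


class OutlineParser(HTMLParser):
    """Record every opening tag with its nesting depth and attributes."""

    def __init__(self):
        super().__init__()
        self.depth = 0
        self.outline = []

    def handle_starttag(self, tag, attrs):
        self.outline.append((self.depth, tag, dict(attrs)))
        self.depth += 1

    def handle_endtag(self, tag):
        # Assumes well-nested markup; void tags such as <br> would need
        # special handling because they have no closing tag.
        self.depth -= 1


def outline(html):
    """Return a list of (depth, tag, attributes) tuples for the document."""
    parser = OutlineParser()
    parser.feed(html)
    return parser.outline


for depth, tag, attrs in outline(SAMPLE_HTML):
    print("  " * depth + tag, attrs)
```

The printed outline is a tree of tags and attributes rather than a rendered page, which is roughly how the scraper "sees" the content.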

Step 3: Data extraction

Once the code has been analyzed, the scraper targets the elements it wants to extract: article titles, product prices, etc.

To do this, the scraper relies on selection methods that identify the right tags in the code. The goal is to sift through the code and keep only the useful data.

👉 The most common method is to use CSS selectors. These target specific elements by class, ID, or position in the hierarchy.

For example, a scraper analyzes a page on an e-commerce site. It finds the following HTML code:

<h1 class="product-title">Sports shoes</h1>

<span class="price">79,99 €</span>

To retrieve these elements, the scraper uses CSS selectors:

- .product-title for the product title

- .price for the price
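
The sketch below imitates what the `.product-title` and `.price` selectors do, using only Python's standard library. It is a simplified stand-in that handles a single class name; dedicated libraries such as Beautiful Soup accept real CSS selectors through `select()`. The `ClassTextExtractor` class and `select_text` function are hypothetical names for this example.

```python
from html.parser import HTMLParser

HTML = """
<h1 class="product-title">Sports shoes</h1>
<span class="price">79,99 €</span>
"""


class ClassTextExtractor(HTMLParser):
    """Collect the text of elements carrying a given class (like `.name` in CSS)."""

    def __init__(self, class_name):
        super().__init__()
        self.class_name = class_name
        self.open_depth = 0  # greater than 0 while inside a matching element
        self.buffer = []
        self.matches = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self.open_depth or self.class_name in classes:
            self.open_depth += 1

    def handle_endtag(self, tag):
        # Assumes well-nested markup without unclosed void tags inside a match.
        if self.open_depth:
            self.open_depth -= 1
            if self.open_depth == 0:
                self.matches.append("".join(self.buffer).strip())
                self.buffer = []

    def handle_data(self, data):
        if self.open_depth:
            self.buffer.append(data)


def select_text(html, class_name):
    """Return the text of every element whose class list contains class_name."""
    extractor = ClassTextExtractor(class_name)
    extractor.feed(html)
    return extractor.matches


title = select_text(HTML, "product-title")[0]
price = select_text(HTML, "price")[0]
print(title, "|", price)
```

Everything else in the page is ignored: only the text inside the matching elements is kept.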

👉 To handle more complex cases (selecting by position, text content, etc.), the scraper uses XPath selectors instead.
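
As a small illustration of XPath-style selection, the sketch below queries the product snippet with Python's built-in `xml.etree.ElementTree`. Two assumptions apply: this module supports only a subset of XPath and needs well-formed markup, so the snippet is wrapped in a hypothetical `<div>` root. Real-world scrapers often rely on a library such as lxml for full XPath support.

```python
import xml.etree.ElementTree as ET

# The product snippet wrapped in a hypothetical <div> so that it forms a
# single well-formed document, which ElementTree requires.
DOCUMENT = """
<div>
  <h1 class="product-title">Sports shoes</h1>
  <span class="price">79,99 €</span>
</div>
"""

root = ET.fromstring(DOCUMENT)

# Select by attribute value, anywhere below the root.
price = root.find(".//span[@class='price']").text

# Select by position: the first <h1> child of the root.
first_heading = root.find("./h1[1]").text

# Select by text content: the element whose text is exactly "Sports shoes".
tag_with_text = root.find(".//*[.='Sports shoes']").tag

print(price, "|", first_heading, "|", tag_with_text)
```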

👉 Note that for dynamic sites that load their content with JavaScript, the scraper often needs an additional tool (a "headless browser") to be able to analyze the entire content.

Step 4: Data storage

Once the data is extracted, the scraper can save it in different formats.

Depending on your needs, you can export the data:

- 📊 In a CSV file, which resembles an Excel spreadsheet,

- 🧩 In JSON, a more flexible format often used by developers,

- 📑 In a database, if the volume is significant.
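
The three storage options above can be sketched with Python's standard library. The record, the `products` table, and the function names are assumptions for illustration; SQLite stands in for "a database", and a larger project might well use a different one.

```python
import csv
import io
import json
import sqlite3

# Hypothetical scraped records.
ROWS = [
    {"title": "Sports shoes", "price": "79,99 €"},
]


def to_csv(rows):
    """Serialize the rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["title", "price"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def to_json(rows):
    """Serialize the rows as JSON, keeping non-ASCII characters readable."""
    return json.dumps(rows, ensure_ascii=False, indent=2)


def to_sqlite(rows, path=":memory:"):
    """Insert the rows into a SQLite table and return the open connection."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE IF NOT EXISTS products (title TEXT, price TEXT)")
    conn.executemany(
        "INSERT INTO products (title, price) VALUES (:title, :price)", rows
    )
    conn.commit()
    return conn


print(to_csv(ROWS))
print(to_json(ROWS))
```

To write real files instead of strings, the same writers can target a file opened with `open(path, "w", newline="", encoding="utf-8")`. Note that the CSV writer quotes the price automatically because it contains a comma.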

You can then analyze, sort, view, or use the collected items as you see fit.

What is the role of a scraper?

A scraper is a bot or piece of software that automatically extracts and stores data during the web scraping process.

Thanks to powerful scrapers, such as those offered by Bright Data, you can collect prices, articles, company data, and much more!

Here are some ideas for practical and relevant uses of a scraper:

- 🔍 Competitive intelligence: collecting product prices from competitors

- 📊 Market analysis: gathering information on trends

- 📰 Content aggregation: creating news feeds

- 🧪 Scientific research: collecting public data for studies

How to scrape for free?

Do you have web scraping projects, but your budget is limited? Don't worry, some scrapers are available for free: software, extensions, or code libraries. There's something for every need.

You can use these free scraping tools to collect data efficiently and quickly.

Find out more in our article on free web scraping!

What is the difference between an API and a scraper?

Both allow you to extract data online, but with a few differences:

- 📌 APIs

These are specialized interfaces that a website provides for collecting information from its pages.

APIs thus let you collect data legally, but only from that website and only the information the site authorizes.

- 📌 Scrapers

Scrapers, on the other hand, let you do web scraping on any website.

They also make it possible to collect all visible data, without being limited to what the site chooses to expose!

We explain the difference between APIs and scrapers in full in our article on the subject.

But to return to how a scraper works, the steps are quite simple:

- 📡 Submit a request

- 🧩 Read the HTML pages to be scraped

- 📊 Extract data (using CSS or XPath)

- 💾 Store it in a useful format

Once you understand the steps, web scraping will be a breeze! Alternatively, if you are a beginner, you can scrape data with Excel. It's very simple and convenient, despite its limitations.

What about you? Do you know of any scrapers that work differently? Feel free to head to the comments section to share your experiences with these tools and web scraping!